The Boardroom That Never Knew

Picture this: a mid-sized e-commerce company has just poured $400,000 into a sophisticated Conversion Rate Optimization program. They’ve hired specialists. They’ve run A/B tests. They’ve redesigned landing pages, rewritten CTAs, and obsessed over heatmaps. Six months later, conversions are flat. The team is baffled.

What they never questioned, not once, was whether the data feeding all those decisions was actually true.

It wasn’t.

Buried inside their analytics platform were thousands of duplicate customer records, ghost sessions from bots, misconfigured tracking pixels, and segmentation logic applied to a CRM that hadn’t been audited in three years. Every hypothesis they tested, every “winning” variant they declared, was built on sand.

This isn’t a cautionary tale from the fringes of business. It’s a pattern playing out across industries, quietly, every single quarter.

The $12.9 Million Nobody Talks About

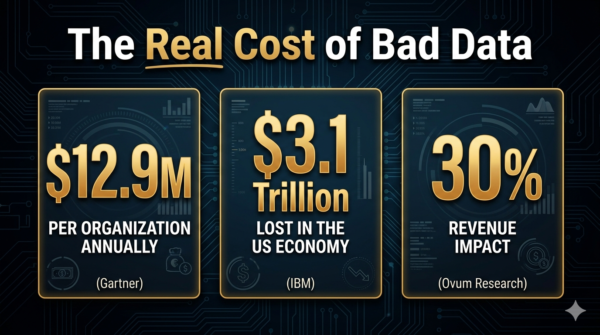

Here’s the number that should be on every executive’s wall: $12.9 million.

That is the average annual cost of poor data quality per organization, according to Gartner, a figure that anchors years of research and cross-industry analysis. Some estimates run even higher. McKinsey found that bad data can trigger a 20% drop in productivity and a 30% increase in operational costs. IBM, zooming out further, calculated that U.S. businesses collectively bleed $3.1 trillion every year due to poor data quality.

And yet, remarkably, 59% of organizations do not measure or analyze data quality regularly. They are flying blind through a storm they helped create.

For CRO teams specifically, the damage is intimate and compounding. Conversion Rate Optimization is an evidence-based discipline. Every decision (which page to test, which customer segment to target, which funnel stage to prioritize) is downstream of data. When that data is corrupted, duplicated, or simply stale, the entire optimization engine stalls. Worse, it appears to be working, outputting confident-looking reports from fundamentally flawed inputs.

The Invisible Saboteur in Your Funnel

How does bad data actually kill CRO? Quietly, systematically, and in ways that are easy to rationalize away.

Scenario one: The phantom segment. A marketing team creates a personalization campaign targeting “high-intent returning visitors.” Their CRM, however, hasn’t been de-duplicated in 18 months. The segment is bloated with inactive accounts, test users, and bots. The campaign “converts” at 3.2%: impressive on paper, meaningless in reality.

Scenario two: The broken test. An A/B test runs for six weeks. The winning variant beats the control by 14%. The team celebrates. But the tracking tag on the variant page was firing twice, doubling its recorded engagement metrics. There was no winner. There was only noise mistaken for signal.
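One way to catch a double-firing tag is to collapse identical events from the same visitor that arrive almost simultaneously, then compare raw and de-duplicated counts per variant. The following is a minimal sketch, not a description of any particular analytics platform: the event fields (`visitor_id`, `variant`, `event`, `ts`), the two-second window, and the sample data are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical window: identical events from the same visitor that arrive
# closer together than this are treated as one double-fired hit.
DEDUP_WINDOW_SECONDS = 2.0


def dedupe_events(events, window=DEDUP_WINDOW_SECONDS):
    """Drop repeats of the same (visitor, variant, event) fired within `window` seconds."""
    last_kept = {}
    kept = []
    for e in sorted(events, key=lambda e: e["ts"]):
        key = (e["visitor_id"], e["variant"], e["event"])
        prev_ts = last_kept.get(key)
        if prev_ts is not None and e["ts"] - prev_ts < window:
            continue  # likely a duplicate firing of the same tag
        last_kept[key] = e["ts"]
        kept.append(e)
    return kept


def events_per_variant(events):
    """Count events for each test arm."""
    counts = defaultdict(int)
    for e in events:
        counts[e["variant"]] += 1
    return dict(counts)


# Made-up data: each arm gets 100 real clicks, but the variant's tag
# fires a second time 0.1 seconds after every click.
raw = []
for i in range(100):
    ts = float(i * 10)
    raw.append({"visitor_id": f"c{i}", "variant": "control", "event": "cta_click", "ts": ts})
    raw.append({"visitor_id": f"v{i}", "variant": "variant", "event": "cta_click", "ts": ts})
    raw.append({"visitor_id": f"v{i}", "variant": "variant", "event": "cta_click", "ts": ts + 0.1})

print(events_per_variant(raw))                 # {'control': 100, 'variant': 200}
print(events_per_variant(dedupe_events(raw)))  # {'control': 100, 'variant': 100}
```

A large gap between the raw and de-duplicated counts on only one arm of a test is a hint that the tag, not the design, produced the "lift". The right window depends on the events being tracked, so treat the value here as a placeholder.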

This problem runs deeper than a misconfigured tag. As explored in “Why Traditional A/B Testing Breaks in an AI-Driven Environment,” even well-structured tests can produce confidently wrong results when the data feeding them is contaminated, a risk that compounds dramatically when visitor populations are shifting underneath you.

Scenario three: The decayed database. B2B contact data decays at rates between 22.5% and 70.3% annually, meaning that at the high end roughly 70% of a prospect database can become outdated within 12 months. Sales teams calling dead numbers. Email campaigns bouncing into the void. Retargeting spend chasing ghosts. Each wasted touchpoint is a tax levied by data neglect.
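To see what those annual rates imply over other time spans, here is a rough sketch that assumes decay compounds at a constant rate through the year. That smoothness is an assumption made for illustration; real contact data may decay unevenly. The function name is hypothetical.

```python
def fraction_still_valid(annual_decay_rate, months):
    """Share of records still accurate after `months`, assuming constant compounding decay."""
    return (1.0 - annual_decay_rate) ** (months / 12.0)


# The low and high annual decay rates quoted in the text.
for annual_rate in (0.225, 0.703):
    for months in (6, 12, 18):
        share = fraction_still_valid(annual_rate, months)
        print(f"annual decay {annual_rate:.1%}, after {months:>2} months: {share:.1%} still valid")
```

At 12 months the function simply returns one minus the annual rate (77.5% and 29.7% of records still valid); the other rows show how quickly an unaudited database drifts between cleanups under the same assumption.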

These aren’t edge cases. A study by Validity and Demand Metric found that nearly 50% of organizations rate their own CRM data quality as poor or neutral, even while 92% of respondents call that same CRM “important” or “very important” for decision-making. The gap between dependency and quality is where the money disappears.

Why CRO Is Uniquely Vulnerable

Most business functions can absorb some data imprecision. Finance rounds figures. HR deals in approximations. But CRO is built on marginal gains: the science of the small and the statistical. A 1% improvement in conversion rate can mean hundreds of thousands in annualized revenue. By the same logic, a 1% distortion in your data can send your entire optimization roadmap in the wrong direction.
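The arithmetic behind that claim can be sketched in a few lines. Every input below (session volume, average order value, baseline conversion rate) is a hypothetical figure chosen to keep the numbers easy to follow, and the function names are illustrative. The sketch shows both readings of "a 1% improvement", and how a 1% overcount on one test arm looks identical to a real lift.

```python
def annual_revenue(sessions, conversion_rate, average_order_value):
    """Revenue = sessions x conversion rate x average order value."""
    return sessions * conversion_rate * average_order_value


def observed_relative_lift(control_cr, variant_cr, variant_overcount=0.0):
    """Relative lift a report would show if the variant's conversions are overcounted."""
    measured_variant_cr = variant_cr * (1.0 + variant_overcount)
    return measured_variant_cr / control_cr - 1.0


# Hypothetical inputs, not taken from any real business.
SESSIONS = 1_000_000
AVERAGE_ORDER_VALUE = 50.0
BASELINE_CR = 0.02  # 2% of sessions convert

baseline = annual_revenue(SESSIONS, BASELINE_CR, AVERAGE_ORDER_VALUE)
point_gain = annual_revenue(SESSIONS, BASELINE_CR + 0.01, AVERAGE_ORDER_VALUE) - baseline
relative_gain = annual_revenue(SESSIONS, BASELINE_CR * 1.01, AVERAGE_ORDER_VALUE) - baseline

print(f"+1 percentage point: {point_gain:,.0f}")     # 500,000
print(f"+1% relative:        {relative_gain:,.0f}")  # 10,000

# Both arms truly convert at 2%, but the variant's tracking overcounts by 1%.
phantom = observed_relative_lift(BASELINE_CR, BASELINE_CR, variant_overcount=0.01)
print(f"Reported lift with no real change: {phantom:.2%}")  # 1.00%
```

Under these made-up inputs, the "hundreds of thousands" figure corresponds to a one-percentage-point gain; a 1% relative gain is worth far less. The last line is the uncomfortable part: a small measurement error on one arm is indistinguishable, in the report, from a genuine improvement of the same size.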

The stakes scale with ambition. As CRO programs increasingly depend on personalization, AI-driven recommendations, and attribution modeling, data quality becomes load-bearing infrastructure. And with AI now reshaping the very nature of who arrives on your pages and why, the data quality bar has risen further still. “Modern CRO Isn’t About More Traffic” makes the case that intent-matching has become the new frontier of conversion, but you can’t match intent you’ve mismeasured.

Gartner now estimates that by 2028, 33% of enterprise applications will include agentic AI: systems that make autonomous decisions based on data pipelines. Feed those systems garbage, and you don’t just waste a test. You automate bad decisions at machine speed.

A 2024 study by Precisely and Drexel University’s LeBow College of Business found that while 60% of organizations say AI is a key influence on their data programs, only 12% report their data is of sufficient quality for effective AI implementation. The aspiration and the infrastructure are dangerously misaligned.

The Fix: Start at the Foundation, Not the Feature

The instinct in CRO culture is to optimize the visible: the button color, the headline, the checkout flow. But the highest-leverage intervention in most organizations isn’t a test. It’s a data audit.

Here is a pragmatic framework for fixing data quality before it kills your next campaign:

- Audit before you optimize. Before launching any CRO initiative, conduct a structured data profiling exercise. Identify missing values, duplicate records, bot traffic contamination, and tracking inconsistencies. This isn’t glamorous, but it’s the difference between building on bedrock and building on quicksand. A real-world example of this audit-first approach in action: the Acara case study shows how a structured website audit uncovered performance problems that were invisible to the team, until they measured what had previously gone unmeasured.

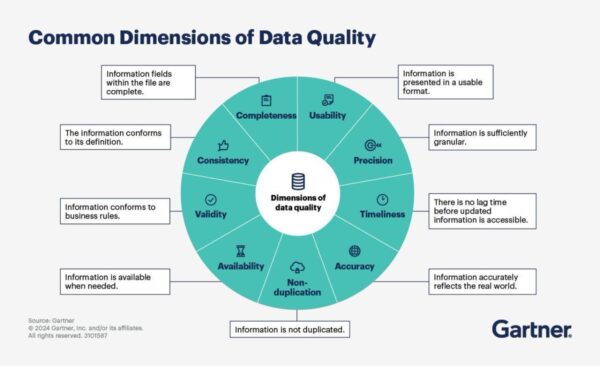

- Establish data governance, not as policy, but as practice. Data governance tools can automate profiling, quality checks, cleansing, and monitoring. Effective governance defines who owns which data, enforces standardized formats, and creates continuous monitoring rather than one-time fixes. Think of it as the immune system of your analytics stack.

- De-duplicate and enrich your CRM systematically. Clean data drives measurable outcomes: research shows clean data produces 20% better campaign response rates, 15% higher close rates within six months, and 12% higher conversion rates. The ROI of cleansing is not hypothetical. It is documented.

- Build hygiene into the point of entry. The most expensive data problems don’t start in your database; they start at the form, the integration, the manual input. Picklists, mandatory fields, and naming conventions prevent bad data from entering the system in the first place. Hygiene is proactive; cleansing is reactive. The goal is to need less of the latter.

- Make data quality a KPI, not an afterthought. Set measurable data quality benchmarks (completeness rates, accuracy scores, duplication percentages) and monitor them with the same rigor as conversion rates. What gets measured gets managed.
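As a starting point for the audit and KPI steps above, two of those benchmarks can be computed directly from a CRM export. This is a minimal sketch under stated assumptions: the required fields, the choice of normalized email as the duplicate key, the target thresholds, and the sample records are all hypothetical, and a real audit would also cover accuracy, bot contamination, and tracking consistency.

```python
REQUIRED_FIELDS = ("email", "first_name", "country")  # hypothetical schema

# Hypothetical targets; set these to whatever the team agrees to hold itself to.
TARGETS = {"completeness": 0.95, "duplication": 0.02}


def normalize_email(value):
    """Trim and lowercase so ' Ana@Example.com ' matches 'ana@example.com'."""
    return value.strip().lower() if isinstance(value, str) else ""


def completeness_rate(records, fields=REQUIRED_FIELDS):
    """Share of required field slots that hold a non-blank value."""
    total = len(records) * len(fields)
    if total == 0:
        return 1.0
    filled = sum(1 for r in records for f in fields if str(r.get(f) or "").strip())
    return filled / total


def duplication_rate(records):
    """Share of records with an email that are surplus copies of another record."""
    emails = [normalize_email(r.get("email")) for r in records]
    emails = [e for e in emails if e]
    if not emails:
        return 0.0
    return 1.0 - len(set(emails)) / len(emails)


def failing_kpis(records, targets=TARGETS):
    """Names of the KPIs that miss their target."""
    failures = []
    if completeness_rate(records) < targets["completeness"]:
        failures.append("completeness")
    if duplication_rate(records) > targets["duplication"]:
        failures.append("duplication")
    return failures


# Made-up sample export.
records = [
    {"email": "ana@example.com", "first_name": "Ana", "country": "PT"},
    {"email": " Ana@Example.com ", "first_name": "Ana", "country": ""},
    {"email": "bo@example.com", "first_name": None, "country": "SE"},
    {"email": "", "first_name": "Cy", "country": "US"},
]

print(f"completeness: {completeness_rate(records):.1%}")  # 75.0%
print(f"duplication:  {duplication_rate(records):.1%}")   # 33.3%
print("failing:", failing_kpis(records))
```

Run on a schedule and charted next to conversion rate, numbers like these turn data quality from a one-off cleanup into something the team can watch drift and correct.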

The Quiet Competitive Advantage

The businesses that will win the next decade of digital competition aren’t necessarily the ones running the most sophisticated AI models or the most creative A/B tests. They are the ones who did the unglamorous work first: building data pipelines they can actually trust.

Clean data is not just an IT problem. It is a revenue problem, a strategy problem, and increasingly, an AI-readiness problem. The organizations that treat data quality as the prerequisite for CRO, rather than a background concern, will compound their optimization gains over time while competitors chase phantom conversions.

The $12.9 million question isn’t whether your data is perfect. It never will be. The question is whether you are systematically reducing the distance between what your data says and what is actually true.

That gap is where your conversions are hiding.

References

Esri / ArcNews. (2024, Summer). Data quality across the digital landscape.

Dataddo Blog. (2024, September 13). The cost of poor data quality: A comprehensive analysis.

Datafortune. (2026, January 22). 5 hidden costs of poor data quality in 2026.

Landbase. (2026, March). Data decay rate statistics: 20 critical facts every GTM leader should know in 2026.

Actian. (2025, August 14). The costly consequences of poor data quality.

Precisely & Drexel LeBow. (2024, September 18). New global research points to lack of data quality and governance as major obstacles to AI readiness [Press release].

WebFX. (2025). The conversion rate optimization trends defining 2025 & 2026: What works & what’s next.

Acceldata. (2026, January 31). The hidden cost of poor data quality governance: ADM turns risk into revenue.

Analytics8. (2025). Data governance tools & practices to improve data quality.