There is a trade-off happening quietly every time someone types a question into Google and gets an answer without ever clicking a link.

On the surface, it looks like efficiency. The user got what they needed and moved on. No unnecessary page loads. No wandering through three different websites to find a single fact. Less browsing, you might think, should mean less energy and, therefore, a smaller carbon footprint.

But here is the part of the story that almost nobody is telling: the AI that generated that summary had to do a lot of work to get there. Work that draws electricity. Electricity that, in most parts of the world, still comes with a real carbon cost.

This is the tension sitting at the centre of AI-powered search in 2026. And it is a tension that any business serious about digital sustainability urgently needs to understand, not just at the surface level, but structurally.

Because the answers to this question will shape how you build your content strategy, how you report on your digital emissions, and how credibly you can claim a commitment to reducing your environmental footprint.

First, Let Us Revisit What We Already Know About Website Emissions

Before examining AI, it helps to have a firm foundation in how digital emissions actually work for conventional websites, because the picture is more counterintuitive than most people expect.

Our Acara Strategy case study showed that for a typical professional services website, the biggest source of emissions is not the server. It is the visitor’s device.

In Acara’s case, 74% of their total monthly carbon emissions came from visitors’ devices rendering pages, with only 9% from actually running the website infrastructure. Embodied emissions from hardware manufacturing accounted for a further 16%.

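The implication for the old web model is direct: every visit that ends without a useful outcome is device-side energy spent for nothing. Bad user experience equals wasted carbon. Low conversions equal a high carbon cost per meaningful interaction.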

This is the baseline from which everything else in this article needs to be understood.

What AI Summaries Actually Change

Google’s AI Overviews, Perplexity, ChatGPT search, and similar tools have fundamentally changed the flow of information. Instead of clicking through to multiple websites, a growing number of users receive a synthesised answer directly within the search results page. For simple, factual questions, the kind that used to generate high-volume, high-bounce traffic to informational pages, AI summaries often mean the user never visits a website at all.

From a pure browsing-efficiency standpoint, this reduces certain forms of digital activity. Fewer page loads per question answered. Fewer sessions on ad-heavy, script-laden pages. Fewer visitors bouncing off content that was never the right fit for them.

If we follow the logic of the Acara case study directly, fewer low-intent visits should, in theory, mean less wasted device-side energy. Fewer people loading pages they were never going to act on.

But the question is: what replaces all that browsing?

A large language model processing every query and generating a coherent paragraph in response. And that is not a passive, lightweight activity.

The Energy Cost of an AI-Generated Answer

This is where the data becomes genuinely important, and where the picture gets complicated.

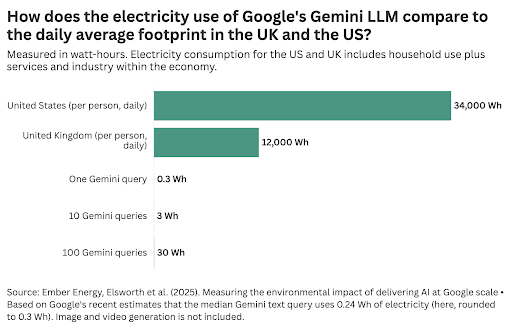

A single conventional Google search currently uses around 0.3 watt-hours (Wh) of electricity. A GPT-4o query, according to independent benchmarking research published in May 2025, uses approximately 0.42 Wh – roughly 40% more energy than a traditional search for a short query. When queries become longer and more complex, that gap widens significantly.

Earlier, rougher estimates suggested the gap was far larger, anywhere from 50 to 90 times more energy per query. The reason those early figures have been revised downward is meaningful: AI hardware and model efficiency have improved dramatically.

Google reported in 2025 that a typical Gemini query now consumes 0.24 Wh of electricity, representing a 33-fold reduction in energy use compared to the prior year, with carbon emissions per query falling by a factor of 44 over the same period.

These are genuine improvements. The researchers doing this work should be credited for it. But, and this is the part that matters for anyone trying to understand the system-level effect, efficiency gains at the per-query level do not automatically translate into lower total emissions when usage is simultaneously growing at scale.

According to MIT Technology Review’s in-depth 2025 investigation, data centres now account for 4.4% of all electricity consumption in the United States, and the carbon intensity of that electricity is roughly 48% higher than the US grid average. The reason is that AI data centres require constant power, 24 hours a day, 7 days a week, and cannot rely on intermittent renewable sources like solar and wind in the way that other businesses can.

Google’s overall carbon footprint rose 48% between 2019 and 2024.

Microsoft’s grew 29% between 2020 and 2024.

Both companies attributed the increase directly to AI. These are the same organisations publishing efficiency improvements in their per-query numbers.

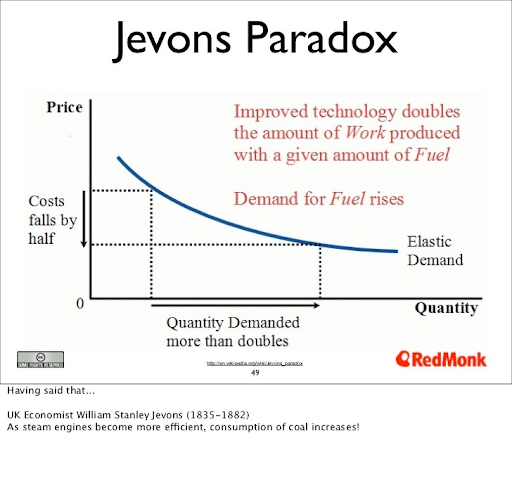

The Jevons Paradox, where improvements in efficiency lead to greater overall consumption because people use more of the thing that got cheaper, is operating at full force.

So the honest answer to “is an AI summary cheaper than a page visit?” is: for a single query in isolation, increasingly yes. For the system as a whole, still no.
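The gap between per-query and system-level results comes down to simple multiplication: total energy is per-query energy times query volume. The sketch below is only an illustration of that arithmetic. The per-query figures are the Gemini numbers quoted above; the baseline volume and the growth factors are hypothetical placeholders, not measured data.

```python
def total_energy_kwh(wh_per_query: float, queries: float) -> float:
    """Total energy in kWh for a per-query cost (Wh) and a query count."""
    return wh_per_query * queries / 1000.0


def breakeven_growth(wh_before: float, wh_after: float) -> float:
    """Usage growth factor at which total energy stays exactly flat."""
    return wh_before / wh_after


# From the figures quoted above: 0.24 Wh per Gemini query after a
# 33-fold reduction, which implies roughly 7.9 Wh a year earlier.
WH_AFTER = 0.24
WH_BEFORE = WH_AFTER * 33

# HYPOTHETICAL baseline volume, chosen only to make the arithmetic visible.
BASELINE_QUERIES = 1_000_000

before = total_energy_kwh(WH_BEFORE, BASELINE_QUERIES)
for growth in (10, 50, 100):  # hypothetical usage growth factors
    after = total_energy_kwh(WH_AFTER, BASELINE_QUERIES * growth)
    trend = "higher" if after > before else "lower"
    print(f"{growth:>3}x usage: {before:,.0f} kWh -> {after:,.0f} kWh ({trend})")

print(f"Break-even growth: {breakeven_growth(WH_BEFORE, WH_AFTER):.0f}x")
```

In this toy model, any usage growth beyond the efficiency factor pushes total energy up despite the per-query gain, which is the Jevons Paradox in miniature. Real systems also include training, idle capacity, and non-AI workloads, none of which are modelled here.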

The Central Tension: Decentralised Traffic vs. Centralised AI Power

This is the most important structural insight in this entire debate, and it is one that almost nobody in the digital sustainability conversation has framed clearly.

Traditional web browsing distributes its energy costs. Millions of individual devices and servers worldwide each carry a small piece of the load. It is inefficient in places: those device-side emissions from low-quality, high-bounce visits are real environmental waste. But the footprint is spread across the entire digital ecosystem.

AI summaries centralise that work. Instead of millions of devices doing lightweight rendering across hundreds of websites, a handful of enormous data centres perform intensive, continuous computation for every query. The individual visitor’s device barely works: it renders a paragraph of text. But the data centre that produced that paragraph worked very hard, and it does so for every single person running that same query, often without caching the response.

This centralisation also concentrates water consumption. AI data centres require substantial cooling, and this water often comes from municipal supplies or natural water bodies in regions that may already face scarcity.

A Nature Sustainability study published in 2025 found that AI server deployment in the United States alone could generate between 24 and 44 million tonnes of CO₂-equivalent annually between 2024 and 2030.

Neither model is clean. They fail in different ways. But the important shift is that AI has moved the primary source of digital emissions further upstream, away from websites, which businesses can measure and optimise, and toward data centres, over which most businesses have very little visibility or control.

What About the Traffic That Does Not Happen?

The optimistic case for AI summaries is not entirely without merit, and it deserves a fair hearing.

If AI summaries are genuinely replacing low-quality, high-bounce browsing sessions, the kind where someone clicks through to a page full of thin content and leaves within ten seconds, then there is a real argument that total wasted digital energy has decreased. Those unproductive visits were burning device-side energy for nothing. Removing them from the ecosystem is, on its face, an improvement.

Adobe’s 2025 analytics data found that users arriving via AI-referred links showed a 23% lower bounce rate, spent 41% more time on-site, and viewed around 12% more pages per visit compared to traditional organic visitors.

If that holds broadly, it suggests AI is filtering visits and sending only genuinely interested users through: higher-quality traffic, lower volume, and potentially a lower carbon cost per meaningful interaction from the website’s perspective.

Google has also argued that overall organic click volume has remained relatively stable year-on-year despite AI Overviews, and that the technology is expanding the total volume of questions people ask because users discover they can engage with more complex queries than before.

However, independent SEO research complicates that picture. An Ahrefs study found that the presence of an AI Overview correlated with a 58% lower average click-through rate for the top-ranking organic result as of late 2025.

Multiple analysts have noted that any overall traffic stability primarily benefits large platforms such as Reddit, YouTube, and major news organisations, while the long tail of independent publishers and niche content sites loses visits disproportionately.

If traffic is concentrating on fewer, larger platforms, the environmental story shifts again. Those large destinations may be more efficient per page view than the distributed web they are partly replacing, or they may simply be worse at converting visits into meaningful outcomes. The data is not yet settled.

The Measurement Gap Nobody Is Talking About

What is missing from almost every conversation about AI and digital sustainability is an honest accounting of the full system, end-to-end, not just at the node level.

We have reasonable estimates for how much energy a single AI query consumes. We have data on how AI Overviews are reducing click-through rates. We have evidence, from work like the Acara case study, about how device-side emissions dominate website carbon footprints.

What we do not have is a clean, comprehensive comparison. For a given piece of information, a recipe, a product comparison, an explanation of how something works, what is the total carbon cost of the user getting that information via a traditional search click, versus receiving it through an AI summary?

That calculation would need to account for the AI inference energy; the reduction in downstream page loads; the device-side energy for both scenarios; the network transmission in each case; and the upstream training costs amortised across usage.
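As a rough sketch of how such a comparison could be structured, the Python below adds up the components listed above for each pathway. The `Pathway` class and its field names are hypothetical, and every value marked PLACEHOLDER is invented purely so the code runs; only the 0.3 Wh search and 0.42 Wh inference figures come from earlier in this article. It also assumes a single grid intensity for devices and data centres alike, which is a simplification.

```python
from dataclasses import dataclass


@dataclass
class Pathway:
    """Energy (Wh) spent delivering one answer to one user."""
    search_wh: float              # conventional search lookup
    inference_wh: float           # AI inference (0 if no summary is generated)
    training_wh_amortised: float  # share of training cost per query
    page_loads: float             # average downstream page loads per question
    device_wh_per_load: float     # device-side rendering per page load
    network_wh_per_load: float    # network transmission per page load

    def total_wh(self) -> float:
        per_load = self.device_wh_per_load + self.network_wh_per_load
        return (self.search_wh + self.inference_wh
                + self.training_wh_amortised + self.page_loads * per_load)


def grams_co2e(energy_wh: float, grid_g_per_kwh: float) -> float:
    """Convert energy in Wh to grams CO2e at a given grid intensity."""
    return energy_wh / 1000.0 * grid_g_per_kwh


# Traditional click-through: search plus several page loads.
click = Pathway(search_wh=0.3, inference_wh=0.0, training_wh_amortised=0.0,
                page_loads=2.0,           # PLACEHOLDER
                device_wh_per_load=0.5,   # PLACEHOLDER
                network_wh_per_load=0.1)  # PLACEHOLDER

# AI summary: search plus inference, with fewer downstream page loads.
summary = Pathway(search_wh=0.3, inference_wh=0.42,
                  training_wh_amortised=0.05,  # PLACEHOLDER
                  page_loads=0.3,              # PLACEHOLDER
                  device_wh_per_load=0.5,      # PLACEHOLDER
                  network_wh_per_load=0.1)     # PLACEHOLDER

for name, pathway in (("click", click), ("summary", summary)):
    print(f"{name}: {pathway.total_wh():.2f} Wh per answer")
```

With placeholder inputs the totals mean nothing in themselves. The point of the structure is that the answer flips depending on values nobody outside the providers can currently fill in.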

As the MIT Technology Review investigation noted, most of this information is treated as a trade secret by the companies running the models. Without that transparency, any business claiming to have fully measured its digital carbon footprint while ignoring the AI it uses daily is working with an incomplete picture.

This is not a criticism of individual businesses. It is a gap in the available infrastructure. But it is a gap that sustainability-conscious organisations need to acknowledge explicitly, rather than pretend it does not exist.

The Implications for Digital Marketers and Content Strategists

For practitioners managing digital content and marketing strategy, the implications of this shift cut in several concrete directions.

The first is that the nature of digital emissions has changed structurally. If you are measuring your website’s carbon footprint, as you should be, the conversation can no longer stop at page weight, hosting, and server location. The upstream cost of AI-generated content and AI-mediated discovery is now part of your emissions story, even if it does not show up in any tool you currently use.

The second is that quality of traffic is increasingly both a business metric and a sustainability metric. If AI Overviews are sending you fewer but more engaged visitors, people who arrive with genuine intent, then your carbon cost per conversion may actually be improving, even as your total traffic falls. That is worth measuring explicitly and reporting transparently. It is also the insight that connects sustainable web strategy to the broader argument we made in our piece on bounce rate and digital waste.

The third is that your AI tool usage is now part of your emissions footprint in a way that was easy to ignore twelve months ago. The AI you use to generate content, summarise research, and optimise campaigns is drawing from the same energy-intensive infrastructure that is powering AI search summaries. Those emissions belong in your digital reporting, not in a separate “operations” bucket.

As regulatory frameworks like the EU’s Corporate Sustainability Reporting Directive (CSRD) and California’s climate disclosure laws extend to digital operations, the businesses that have already built measurement practices across their full digital stack will be far better positioned than those scrambling to retrofit them.

Six Actionable Steps You Can Take Right Now

Understanding the problem is not enough. Here is what organisations can do immediately to respond intelligently to this shift.

- Audit your AI tool usage alongside your website footprint. Most digital carbon audits stop at the website. They do not include the AI tools your team uses daily: the content generation platforms, the search assistants, the campaign optimisation tools. Start tracking these as a separate line item. Use benchmark figures: large model queries at approximately 0.002 kWh (2 Wh) each, image generation at 0.003–0.005 kWh per image, video generation at 0.05–0.1 kWh per minute. Note that the per-query benchmark is well above the 0.24 to 0.42 Wh quoted earlier for short queries, so treat it as a conservative planning figure. Multiply by your monthly usage volumes and start building a baseline.

- Track your carbon cost per conversion, not just your total emissions. Total emissions are a blunt instrument. What matters for sustainability strategy is how much carbon you are generating per meaningful outcome: per lead, per sale, per download, per answered question. If your total traffic falls due to AI Overviews but your conversion rate improves, your carbon efficiency may actually be going up. Use Everything Green’s Green Score tool to begin measuring this at the website level, then layer in your AI usage data.

- Redesign your content strategy around intent, not volume. If AI summaries are going to answer the simple, high-volume informational queries that used to drive traffic to your site, fighting that trend is probably futile. Instead, build content that AI systems cannot adequately replace: original research, case studies built on real proprietary data, practitioner perspectives that require lived experience, interactive tools that require a page visit to use. This is not just good for SEO in the post-AI landscape. It is also good for your carbon efficiency, because it concentrates your audience footprint on visitors with genuine intent.

- Choose AI providers with strong renewable energy commitments. When selecting AI tools for your marketing stack, ask providers explicitly about their energy sources, data centre locations, and carbon reporting. Google Cloud operates several regions on 90%+ renewable energy. Microsoft Azure offers carbon-aware routing to lower-carbon data centres. Anthropic (the company behind Claude) purchases renewable energy credits matching their consumption. Where your AI workload runs matters enormously for its actual carbon impact.

- Use the right model for the task, always. The single most impactful operational change most teams can make is stopping the default to the most powerful available model for every task. For routine content drafts, social captions, email personalisation, and research summaries, smaller models deliver comparable results at 40-60% less energy. Establish clear internal guidelines: use large models for strategic, nuanced, or complex work. Use smaller, efficient models for everything routine. This reduces both your carbon footprint and your AI spend, the rare case where the environmental choice and the cost-saving choice are the same.

- Measure before you make claims. If your sustainability reporting mentions AI or digital operations, make sure the numbers behind it are real. Vague claims about “sustainable AI” or “responsible digital practices” without supporting data are increasingly scrutinised by regulators, by enterprise buyers, and by audiences who have become fluent in spotting greenwashing. Use platforms like Everything Green’s Green Score to produce verifiable, methodology-backed emissions figures you can actually stand behind in a report or a pitch.
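The carbon-cost-per-conversion metric from the second step can be sketched in a few lines of Python. This is a minimal illustration, not a reporting methodology: the function name, the per-visit emissions figure, the traffic volumes, and the AI tool emissions are all hypothetical placeholders that you would replace with your own measured data.

```python
def carbon_per_conversion(visits: int, g_co2e_per_visit: float,
                          conversion_rate: float,
                          ai_tools_g_co2e: float = 0.0) -> float:
    """Grams CO2e per conversion for one reporting period.

    Website emissions (visits x per-visit figure) and AI tool emissions
    are summed, then divided by the number of conversions.
    """
    conversions = visits * conversion_rate
    if conversions <= 0:
        raise ValueError("no conversions in this period; metric is undefined")
    total_g = visits * g_co2e_per_visit + ai_tools_g_co2e
    return total_g / conversions


# HYPOTHETICAL month before AI Overviews: more traffic, lower intent.
before = carbon_per_conversion(visits=10_000, g_co2e_per_visit=0.5,
                               conversion_rate=0.01)

# HYPOTHETICAL month after: less traffic, higher intent, AI tools included.
after = carbon_per_conversion(visits=6_000, g_co2e_per_visit=0.5,
                              conversion_rate=0.025, ai_tools_g_co2e=1_500)

print(f"before: {before:.1f} g CO2e per conversion")
print(f"after:  {after:.1f} g CO2e per conversion")
```

In this made-up scenario the cost per conversion falls even after AI tool emissions are added in. With different inputs it could just as easily rise, which is why the metric needs real data rather than assumptions.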

The Honest Answer

Are AI summaries increasing or reducing digital emissions? At this point in 2026, the most accurate answer is: they are changing where emissions occur, not eliminating them.

The decentralised, messy energy cost of millions of browser sessions is being partially replaced by the centralised, intense energy cost of AI inference at scale.

Whether the net effect is positive or negative depends on how clean the data centres running those models are, how rapidly usage is growing relative to efficiency improvements, and whether the quality improvement in remaining web traffic compensates for the volume and variety that the distributed web has lost.

The broader potential of AI for climate work is real. A 2025 analysis estimated that AI could help reduce global emissions by 3.2 to 5.4 billion tonnes of CO₂-equivalent annually by 2035, if applied deliberately to policy design, energy grid optimisation, and industrial efficiency. But that potential requires deliberate choices, not simply deploying AI everywhere and assuming the efficiency gains will eventually outpace the consumption growth.

The Jevons Paradox tells us they probably will not, at least not automatically.

What this moment actually calls for is a generation of digital practitioners who are willing to measure honestly, report transparently, and make intentional choices about where and how they deploy technology. The businesses that build that capability now, across their full digital stack, including AI, will not only be better positioned for incoming regulation. They will be better positioned to lead the conversation that the rest of the industry is still figuring out how to have.

FAQ

Q: Does switching from a website to AI-generated answers actually save carbon at the system level?

Not necessarily, and this is the question most analyses get wrong by looking at only one part of the system. A single AI-generated summary may involve less device-side energy for the end user than loading a full website, but the AI data centre processing that query is working considerably harder than a conventional web server would. The net effect depends heavily on the energy source powering the data centre, the size of the model being used, and whether the result would have generated a high-bounce, low-value visit anyway.

Q: Should I still be optimising my website’s carbon footprint if AI is eating my traffic?

Yes, more than ever. Fewer visits mean less total device-side energy, but the visits you do receive become higher-stakes from a carbon perspective: if you convert them well, your carbon cost per outcome improves. If your site is slow, confusing, or script-heavy and drives those remaining visitors away, you are burning energy for nothing in a more concentrated way.

Q: How do I include AI tool usage in my company’s sustainability reporting?

Start with a usage audit: catalogue every AI tool your team uses, estimate average daily queries or generations, and apply benchmark energy figures (approximately 0.002 kWh per large model query, 0.004 kWh per generated image). Multiply by your grid’s carbon intensity. The global average is around 475 g CO₂ per kWh, but this varies significantly by region and provider. Platforms like Everything Green can help integrate this measurement into a broader digital carbon footprint report that aligns with frameworks like the Greenhouse Gas Protocol and the Ad Net Zero Global Media Sustainability Framework.
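The audit arithmetic described above could be sketched as follows. The benchmark figures and the global average grid intensity are the ones quoted in this answer; the usage volumes, the dictionary keys, and the function name are hypothetical and exist only for illustration.

```python
# Benchmark figures quoted in the answer above (kWh per unit of usage).
BENCHMARK_KWH = {"llm_query": 0.002, "image": 0.004}

# Global average grid intensity quoted above, in g CO2 per kWh.
GLOBAL_AVG_G_PER_KWH = 475.0


def monthly_ai_footprint(usage: dict,
                         grid_g_per_kwh: float = GLOBAL_AVG_G_PER_KWH):
    """Return (kWh, kg CO2) for one month of AI tool usage.

    `usage` maps a usage type (a key of BENCHMARK_KWH) to a monthly count.
    """
    unknown = set(usage) - set(BENCHMARK_KWH)
    if unknown:
        raise KeyError(f"no benchmark for: {sorted(unknown)}")
    kwh = sum(BENCHMARK_KWH[kind] * count for kind, count in usage.items())
    kg_co2 = kwh * grid_g_per_kwh / 1000.0
    return kwh, kg_co2


# HYPOTHETICAL monthly volumes for a small marketing team.
usage = {"llm_query": 20_000, "image": 500}
kwh, kg = monthly_ai_footprint(usage)
print(f"{kwh:.1f} kWh, {kg:.2f} kg CO2 at the global average grid intensity")
```

Passing a regional or provider-specific value for `grid_g_per_kwh` is the simplest way to reflect where the workload actually runs.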

Q: Is the data on AI energy consumption reliable enough to use in public reporting?

Current estimates are accurate to approximately ±30% in most cases, sufficient for trend tracking and comparative decision-making, but not precise enough for highly specific regulatory claims without supplementary data. The transparency problem is real: most major AI providers treat energy consumption data as proprietary.

Google has been the most forthcoming, publishing per-query energy figures for Gemini. Until industry-wide disclosure standards exist, organisations should report ranges rather than point figures and be explicit about the methodology and assumptions behind their numbers.
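Reporting a range instead of a point figure is straightforward to automate. The sketch below applies the approximate ±30% uncertainty mentioned above to a single estimate; the function name and the example figure are hypothetical, and a symmetric band is itself a simplifying assumption.

```python
def as_range(point_estimate: float, relative_uncertainty: float = 0.30):
    """Return (low, high) bounds around a point estimate.

    The default of 0.30 mirrors the approximate +/-30% accuracy cited above.
    """
    if not 0 <= relative_uncertainty < 1:
        raise ValueError("relative_uncertainty must be in [0, 1)")
    low = point_estimate * (1 - relative_uncertainty)
    high = point_estimate * (1 + relative_uncertainty)
    return low, high


# HYPOTHETICAL monthly estimate of 20 kg CO2e from AI tool usage.
low, high = as_range(20.0)
print(f"Estimated AI tool emissions: {low:.0f} to {high:.0f} kg CO2e per month")
```

Stating the assumed uncertainty alongside the range keeps the methodology visible to whoever reads the report.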

Q: What is the Jevons Paradox, and why does it matter for AI sustainability?

The Jevons Paradox, named after the 19th-century economist William Stanley Jevons, describes the phenomenon where improvements in the efficiency of using a resource lead to greater overall consumption of that resource, not less, because the lower cost or effort encourages more use. It is directly relevant to AI: Google has reduced the energy cost of a Gemini query 33-fold in a single year, yet its total carbon footprint rose 48% between 2019 and 2024, driven by explosive growth in AI usage. Per-query efficiency improvements are genuinely valuable, but they do not automatically translate to lower total emissions in a fast-growing system.

Q: What kind of content is most at risk of being replaced by AI summaries, and how does that change my carbon strategy?

Thin informational content (listicles, basic how-to guides, definition pages, and simple FAQs) is most at risk of being surfaced directly in AI Overviews without a click. These were also historically some of the highest-volume, lowest-conversion page types: exactly the kind of visits that, from a carbon perspective, generated the most device-side energy for the least meaningful outcome. Strategically, redirecting content investment toward proprietary research, original case studies, interactive tools, and deeply specific practitioner knowledge serves both your SEO resilience and your carbon efficiency, because it builds an audience of higher-intent visitors whose device energy actually goes toward something of value.

References

Luccioni, A. S., Jernite, Y., & Strubell, E. (2024). Power hungry processing: Watts driving the cost of AI deployment? ACM FAccT 2024.

arXiv. (2025). How hungry is AI? Benchmarking energy, water, and carbon footprint of LLM inference.

Vanderbauwhede, W. (2025). Estimating the increase in emissions caused by AI-augmented search. arXiv preprint arXiv:2407.16894.

Ren, S., et al. (2025). Environmental impact and net-zero pathways for sustainable artificial intelligence servers in the USA. Nature Sustainability.

MIT Technology Review. (2025). We did the math on AI’s energy footprint.

Ritchie, H. (2025). What’s the carbon footprint of using ChatGPT or Gemini? Sustainability by Numbers.

Computing.co.uk. (2025). Google publishes data on energy use of AI search queries.

Tutt, M. (2025). What is the environmental cost of Google’s AI Overview searches?

Patterson, D., et al. (2021). Carbon emissions and large neural network training. arXiv preprint arXiv:2104.10350.

Dodge, J., et al. (2022). Measuring the carbon intensity of AI in cloud instances. Proceedings of ACM FAccT 2022.

Ahrefs. (2025). AI Overviews reduce clicks — updated research.